15+

Years Experience

1100+

Projects

250+

Clients

150+

Experts

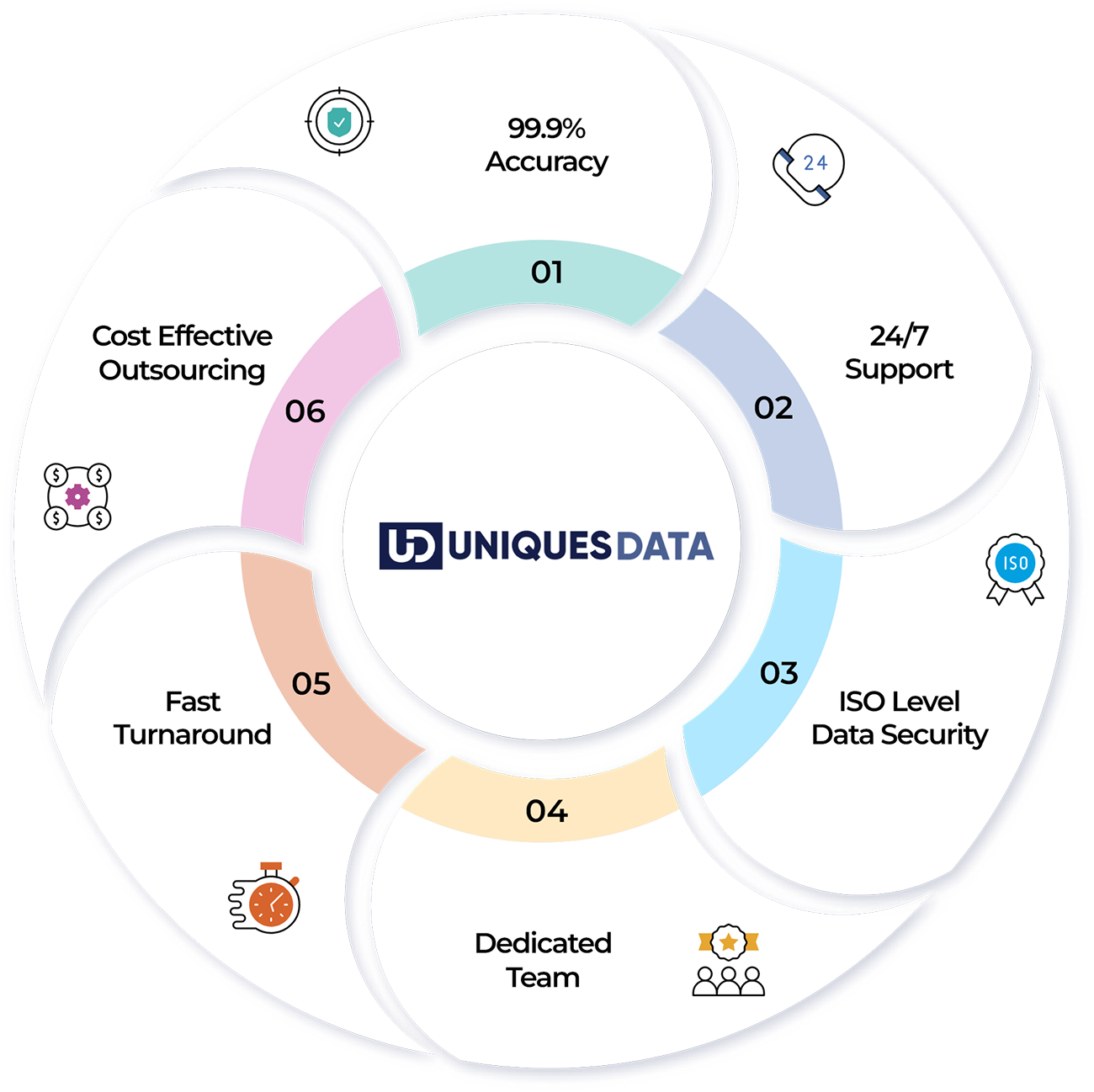

About Us

UniquesData is a dynamic, client-centric data entry services provider based in India with a global perspective. Since 2009, we have provided comprehensive data entry and data management services to numerous industry verticals. Our primary focus is converting complex data into simple, easily accessible forms through a variety of data management, data conversion, and data processing services.

High Quality Data Digitization Solutions

Comprehensive data solutions designed to accelerate your digital transformation journey

Data Analytics & BI Solutions

Data Analytics & BI solutions act as a lens on your business, turning data into actionable visual stories. With advanced Power BI dashboards and predictive modeling, businesses can anticipate what’s coming next in the market.

Web Data Research & Scraping

We offer data scraping and web research services that make sorting your data easier. They organize data systematically and keep it conveniently available so you can draw valuable insights from it.

Data Processing / Enhancement

Systematically organized data saves time when extracting information. UniquesData offers a range of services that simplify business operations and improve your digital database.

Data Entry & Digitization

UniquesData offers reliable data digitization and data entry solutions that give businesses an accurate digital database from which to extract relevant information.

Elevate Your Enterprise with UniquesData Precision

UniquesData is a leading, renowned data management service provider. Our skilled team, up-to-date technology, best-in-class infrastructure, and ability to manage large volumes of complex data help your business thrive and excel in a dynamic market through precise use of digital databases. For 15+ years, we have refined complexity into clear, well-structured databases. Partnering with UniquesData brings a streamlined workflow and a holistic business approach.

Industrial Expertise

We provide data management, data processing, data conversion, and data enrichment solutions to different market verticals. UniquesData aims to deliver high-quality, accurate, and efficient digital databases that help businesses thrive in their market segments.

Healthcare

The healthcare industry collects and generates massive amounts of data, which demand careful management to yield actionable insights. UniquesData provides a holistic approach that helps the healthcare industry manage sensitive medical records accurately and efficiently.

Banking

Data helps the banking and finance industry stay up to date on current market scenarios, trends, and practices. UniquesData offers accurate data structures, secure databases, and easy access to information under security controls.

Ecommerce

The growth of ecommerce has produced more data, and putting that data to use is essential for effective results. Ecommerce data digitization services help identify and remove irrelevant content, resulting in clean data that drives actionable insights into market and customer behavior.

Insurance

Insurance digitization and processing services allow professionals to manage, store, and format data uniformly for easy access, informed decisions, and the delivery of customer-friendly products.

Legal

Legal data management lets law firms focus on their core role by keeping data well organized and streamlining daily operations. Data entry and digital conversion of legal documents enhance security and make access easier.

Logistics

Digitizing logistics documents and transportation back-office support tasks decreases operational costs. UniquesData's professional data entry experts help optimize routes and manage inventory digitally.

Education

Data collected from different platforms is increasing the demand for education data entry. UniquesData brings proficiency in education data entry, streamlining data management and access for students and faculty.

Real Estate

Managing many different kinds of data is one of the more challenging tasks for real estate professionals. UniquesData brings precision to real estate data digitization through a talented team and the right technology.

Travel and Hospitality

UniquesData offers extensive customer data management for the travel and hospitality industry, delivered by a team of experienced professionals using cutting-edge technology at affordable prices.

Roadmap to Success

Transparency in Every Step for Accurate Outcomes

01

Project Analysis

Before a single byte is moved, we define the scope and requirements. This stage involves identifying data sources, defining end goals, and establishing technical specifications (formats, timelines, and compliance requirements). It’s the "blueprint" phase where potential roadblocks are identified.

02

Data Processing

This is a crucial phase. Raw data is cleaned, sorted, and transformed into a structured format. This may involve:

- Data Normalization

- Validation

- Aggregation
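As a rough illustration of how these three steps can fit together, here is a minimal Python sketch. It is not UniquesData's actual pipeline: the record fields (`client`, `region`, `amount`), the sample data, and the function names are all hypothetical and chosen only for the example.

```python
from collections import defaultdict

# Hypothetical raw records; field names and values are illustrative only.
RAW_RECORDS = [
    {"client": "  Acme Corp ", "region": "north", "amount": "1,200.50"},
    {"client": "acme corp", "region": "North", "amount": "300"},
    {"client": "Globex", "region": "SOUTH", "amount": "n/a"},
    {"client": "Globex", "region": "south", "amount": "99.5"},
]


def normalize(record):
    """Normalization: collapse whitespace, unify casing, drop thousands separators."""
    return {
        "client": " ".join(record["client"].split()).title(),
        "region": record["region"].strip().lower(),
        "amount": record["amount"].replace(",", "").strip(),
    }


def is_valid(record):
    """Validation: required fields must be non-empty and amount must be numeric."""
    if not record["client"] or not record["region"]:
        return False
    try:
        float(record["amount"])
    except ValueError:
        return False
    return True


def aggregate(records):
    """Aggregation: sum amounts per (client, region) pair."""
    totals = defaultdict(float)
    for r in records:
        totals[(r["client"], r["region"])] += float(r["amount"])
    return dict(totals)


def process(raw):
    """Run the three steps in order; return the totals and the rejected records."""
    normalized = [normalize(r) for r in raw]
    valid = [r for r in normalized if is_valid(r)]
    rejected = [r for r in normalized if not is_valid(r)]
    return aggregate(valid), rejected


totals, rejected = process(RAW_RECORDS)
print(totals)
print(rejected)
```

Keeping rejected records instead of silently dropping them is a design choice made for this sketch, so that invalid rows can be reviewed later rather than lost.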

03

Quality Check

A rigorous audit is performed to ensure the processed data meets the standards set during Project Analysis. This often involves both automated scripts (to identify technical glitches) and manual sampling (to ensure contextual accuracy).
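The combination of automated checks and manual sampling might look something like the Python sketch below. It assumes rows are simple dictionaries. The specific checks (missing fields, duplicate rows), the 10% sampling fraction, and all function names are assumptions made for illustration, not a description of the actual audit tooling.

```python
import random


def automated_checks(rows, required_fields):
    """Return (row_index, problem) pairs for rows that fail basic technical checks."""
    issues = []
    seen = set()
    for i, row in enumerate(rows):
        # Flag any required field that is absent or blank.
        for field in required_fields:
            if not str(row.get(field, "")).strip():
                issues.append((i, f"missing {field}"))
        # Flag rows whose required fields exactly repeat an earlier row.
        key = tuple(row.get(f) for f in required_fields)
        if key in seen:
            issues.append((i, "duplicate row"))
        seen.add(key)
    return issues


def manual_sample(rows, fraction=0.1, seed=0):
    """Pick a reproducible random sample of row indices for human review."""
    if not rows:
        return []
    size = max(1, round(len(rows) * fraction))
    rng = random.Random(seed)
    return sorted(rng.sample(range(len(rows)), size))


# Hypothetical processed rows, used only to exercise the two functions.
rows = [
    {"id": 1, "name": "A"},
    {"id": 2, "name": ""},
    {"id": 1, "name": "A"},
]
print(automated_checks(rows, ["id", "name"]))
print(manual_sample(rows))
```

A fixed seed keeps the sample reproducible, so a reviewer can be pointed at the same rows on a rerun. A real audit might prefer a fresh sample each time.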

04

Final Delivery

The refined data is packaged and sent to the stakeholder in the requested format (e.g., CSV, SQL database, or via API). This step usually includes a Project Completion Report or documentation detailing the transformations applied, ensuring the user knows exactly how to utilize the final output.

Case Studies

File Conversion & Data Cleansing Services for 133K PDF Files in Less Than 65 Days, Boosting the Company's Excellence

Extensive Data Analysis Services Improve Employee Productivity at Global Investment Management Firm

IT Firm Leveraging the Power of Image Annotation Services for AI-based Solutions

Helping Universities to Digitize Criminal Records by Scanned Image Data Entry Services

Ready to Turn Your Raw Data Into Actionable Insights?

Streamline your operations and reduce overhead with our end-to-end data management solutions. Let’s build your data-driven future together.